Artificial intelligence has rapidly evolved from text-only systems into models that can understand and generate multiple forms of content. While early AI systems focused primarily on language or vision in isolation, modern AI applications increasingly rely on multimodal models that can process and reason across text, images, and other data types.

GLM-Image represents an important step in this evolution. It is a multimodal image model designed to unify text understanding, image generation, and visual reasoning within a single system, making it especially suitable for real-world AI products and enterprise applications.

This article offers a complete introduction to GLM-Image, explaining what it is, how it works, what problems it solves, and why it matters for developers, designers, and businesses building AI-powered solutions.

What Is GLM-Image?

GLM-Image is a multimodal image generation and understanding model built as part of the broader GLM (General Language Model) ecosystem. It is the model showcased on the official GLM-Image website, and it goes beyond traditional image generation systems that focus solely on producing images from text prompts.

At its core, GLM-Image connects language and vision through a shared semantic representation. This enables the model to not only generate images from text but also interpret visual content, reason over image–text pairs, and support more advanced multimodal workflows.

The core capabilities of GLM-Image include:

Text-to-Image Generation – Creating images from natural language descriptions

Image Understanding – Analyzing and describing visual content

Multimodal Reasoning – Combining text and images to produce coherent outputs

One of the most notable aspects of GLM-Image is its strong native support for Chinese language prompts, allowing it to understand cultural context, stylistic expressions, and abstract concepts that are often challenging for English-centric models.

Why Multimodal Image Models Matter

Traditional AI pipelines typically separate language processing and image processing into different systems. While this approach works for simple tasks, it limits the ability of AI systems to reason holistically about complex, real-world inputs.

Multimodal image models like GLM-Image address this limitation by learning joint representations of text and images. This unified approach enables AI systems to perform tasks that were previously difficult or impossible, such as:

Generating images that precisely match complex textual intent

Understanding diagrams, posters, or screenshots and explaining them in natural language

Editing images based on detailed textual instructions

Supporting AI agents that can read, see, and reason at the same time

As a result, multimodal models are becoming foundational components for AI assistants, creative tools, enterprise automation systems, and intelligent agents.

Core Capabilities of GLM-Image

1. Text-to-Image Generation

Text-to-image generation is one of the most widely used features of GLM-Image. The model can generate visually coherent images based on natural language prompts that describe not only objects and scenes but also artistic styles, moods, and cultural elements.

Prompts can include:

Visual styles such as realistic, illustration, ink painting, or cinematic lighting

Scene composition, including foreground, background, and perspective

Emotional tone and atmosphere

Cultural and artistic references
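As an illustration, the prompt elements listed above can be assembled into a single text prompt before it is sent to the model. The sketch below is only one way to organize them: the `ImagePrompt` class, its field names, and the `to_text` method are hypothetical helpers invented for this example and are not part of any GLM-Image API.

```python
from dataclasses import dataclass


@dataclass
class ImagePrompt:
    """Hypothetical container for the prompt elements listed above."""
    subject: str
    style: str = ""
    composition: str = ""
    mood: str = ""
    cultural_reference: str = ""

    def to_text(self) -> str:
        # Join only the fields that were filled in, with the subject first.
        parts = [self.subject, self.style, self.composition,
                 self.mood, self.cultural_reference]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = ImagePrompt(
    subject="a lone fisherman on a misty river",
    style="ink painting",
    composition="small boat in the foreground, mountains in the background",
    mood="quiet and contemplative",
    cultural_reference="inspired by Song dynasty landscape scrolls",
)
print(prompt.to_text())
```

Keeping each element in its own field makes it easier to vary one aspect, such as the style, while holding the rest of the description constant.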

Because GLM-Image is trained on multilingual data with a Chinese-first alignment, it performs especially well when prompts contain Chinese aesthetic concepts or culturally specific language.

This capability makes GLM-Image particularly useful for creative professionals, marketing teams, and product designers who need high-quality visuals generated directly from text. More examples and demos can be found on the official GLM-Image site.

2. Image Understanding

GLM-Image is not limited to image generation. It can also analyze and interpret existing images, converting visual information into structured or natural language outputs.

Image understanding tasks include:

Describing the content of an image

Identifying objects, scenes, and relationships

Summarizing posters, illustrations, or design layouts

Supporting image-based question answering

These capabilities are essential for applications such as visual search, document understanding, content moderation, and intelligent customer support systems.

3. Multimodal Editing and Reasoning

A key advantage of GLM-Image is its support for multimodal workflows where text and images are used together. This enables more precise and controllable creative processes.

Typical use cases include:

Editing an image by modifying the background while preserving the main subject

Changing style, color palette, or lighting through text instructions

Generating multiple image variations based on additional context

By combining language understanding with image generation, GLM-Image enables interactive workflows that go beyond one-shot image creation.

How Does GLM-Image Work?

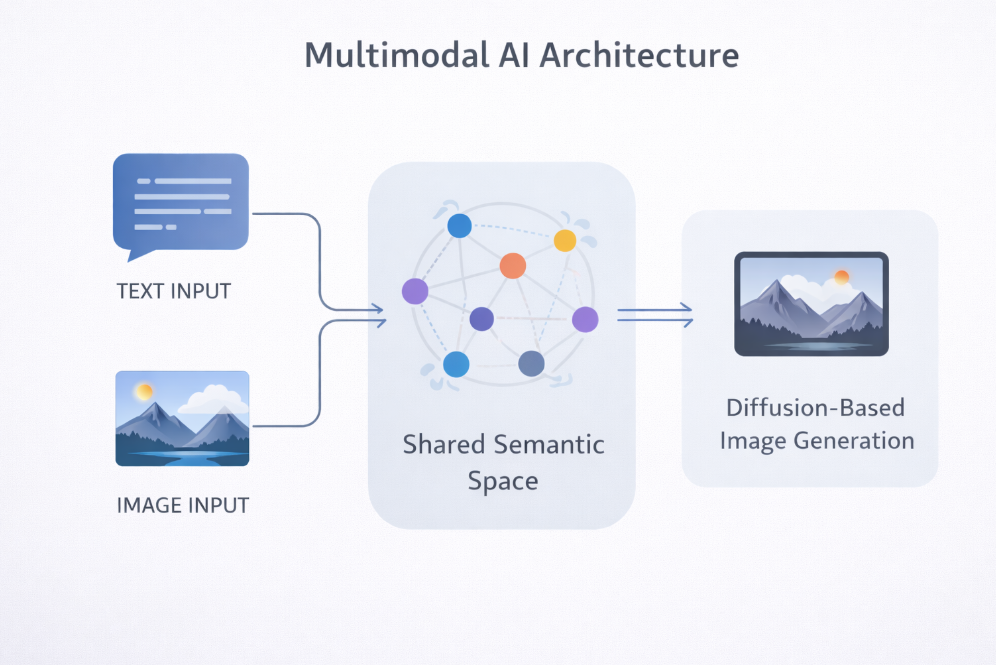

From a technical perspective, GLM-Image combines two major AI paradigms: Transformer-based language modeling and diffusion-based image generation.

In simplified terms, the process works as follows:

1. Text input (and optionally image input) is encoded into a shared semantic space.

2. This semantic representation conditions a diffusion process.

3. The diffusion model iteratively refines noise into a coherent image.

4. The final image reflects both visual quality and semantic alignment.

The key innovation lies in the shared semantic space, which ensures that visual outputs remain closely aligned with the original textual intent.
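The steps above can be illustrated with a deliberately tiny toy model. This is not GLM-Image's implementation: the hash-based `encode_text` function stands in for a learned text encoder, an 8-number vector stands in for an image, and `denoise_step` replaces a trained denoising network with a simple pull toward the conditioning vector. All names and parameters are assumptions made for this sketch, which shows only the overall shape of the loop: encode, start from noise, refine under conditioning.

```python
import hashlib
import random


def encode_text(prompt, dim=8):
    """Toy stand-in for a text encoder: hash the prompt into values in [-1, 1]."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [digest[i] / 127.5 - 1.0 for i in range(dim)]


def denoise_step(x, condition, strength):
    """Move the sample a fraction of the way toward the conditioning vector.

    A real diffusion model would use a learned network to predict noise here.
    """
    return [xi + strength * (ci - xi) for xi, ci in zip(x, condition)]


def generate(prompt, steps=50, strength=0.2, seed=0):
    """Start from Gaussian noise and refine it under the text condition."""
    rng = random.Random(seed)
    condition = encode_text(prompt)
    x = [rng.gauss(0.0, 1.0) for _ in condition]  # pure noise
    for _ in range(steps):
        x = denoise_step(x, condition, strength)
    return x, condition


def distance(a, b):
    """Euclidean distance between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) ** 0.5


noise, condition = generate("ink painting of a misty river", steps=0)
sample, _ = generate("ink painting of a misty river", steps=50)
print("distance before refinement:", round(distance(noise, condition), 4))
print("distance after refinement:", round(distance(sample, condition), 4))
```

In this toy, the distance to the conditioning vector shrinks with every step, which mirrors how conditioning steers each refinement step toward the textual intent. In a real system the output would be an image rather than a copy of the condition.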

Final Thoughts

GLM-Image represents a new generation of multimodal image models that go beyond simple text-to-image generation. By aligning language and vision within a shared semantic framework, it enables richer, more controllable, and more intelligent AI systems.

To explore demos, examples, and future updates, you can visit the official website: https://www.glmimage1.com.