AI image generation is shifting from simple picture synthesis toward cognitive generative systems that can understand dense information, interpret structured text, and render visuals that faithfully reflect knowledge.

What Is Cognitive Generative AI?

Instead of treating images as textures or styles, cognitive generative models aim to capture meaning, relationships, and logical structure before drawing anything. This approach allows AI to generate visuals that reflect the intent and knowledge within the prompt, not just its aesthetic cues.

GLM-Image is an exploration of what cognitive generative AI can achieve. It addresses one of the most overlooked challenges in image modeling today: how to transform text-dense, knowledge-rich, and instruction-heavy input into clear, high-fidelity visuals.

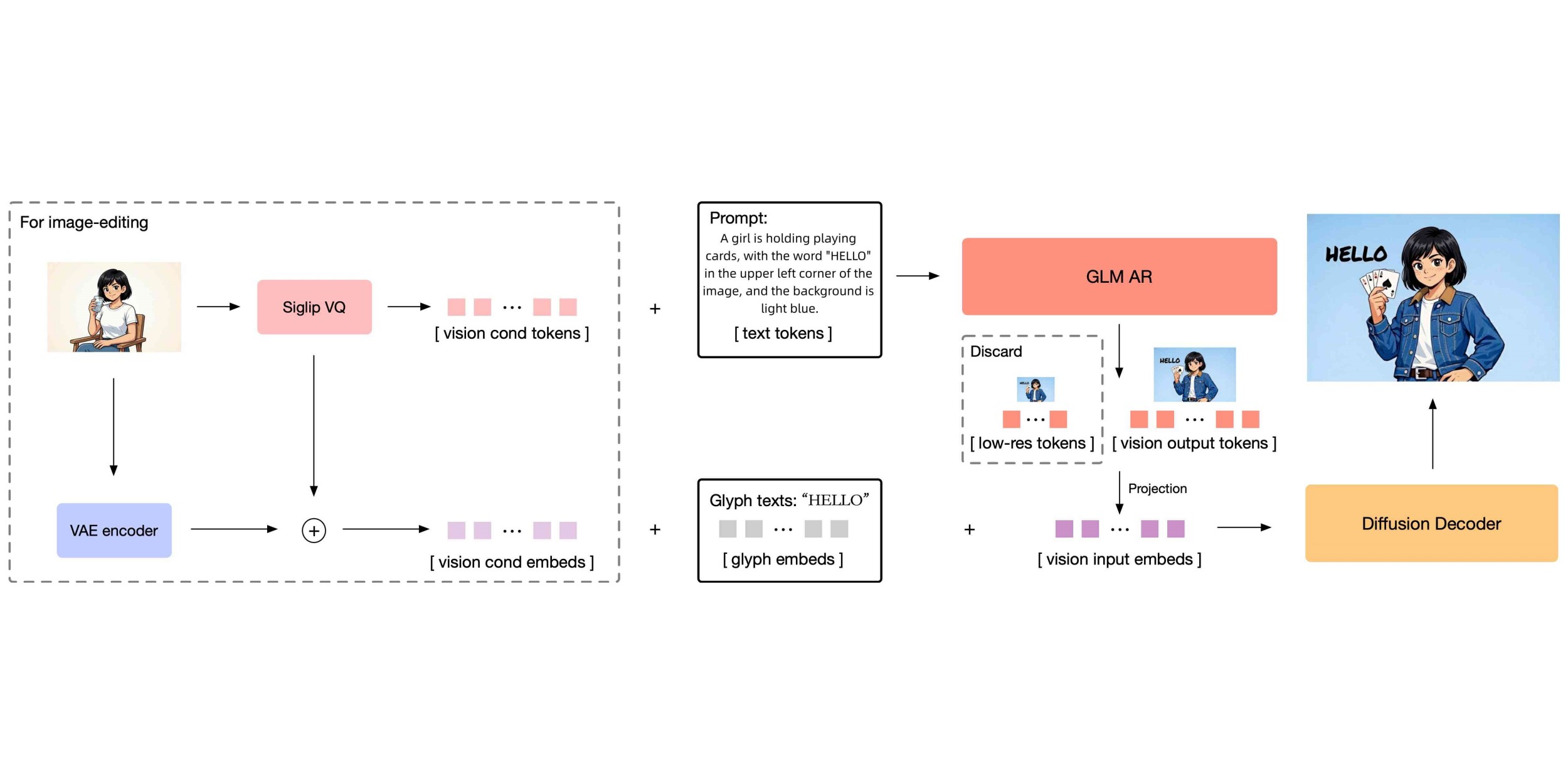

Built within the Z.ai model family, GLM-Image combines deep text understanding, structured semantic reasoning, and precise diffusion-based rendering into a single cohesive framework. To understand why GLM-Image performs so well on these tasks, we need to look more closely at how it works beneath the surface.

What Makes GLM-Image Work Differently From Traditional AI Image Models?

GLM-Image works differently because it separates semantic understanding from visual rendering, ensuring both meaning and aesthetics are handled with equal seriousness.

Most AI image models attempt to interpret text and generate visuals within the same step. This often leads to misinterpretation, beautiful images that fail to follow instructions, or correctly structured images that lack detail and polish.

GLM-Image’s core philosophy is different: Think first, generate second.

Reasoning and rendering are independent tasks, so neither gets compromised.

Knowledge understanding is treated as a primary capability, not an afterthought.

This is why GLM-Image excels where many models struggle: scientific diagrams, labeled illustrations, posters with structured text, knowledge-dense visuals, academic content, and text-intensive layouts.
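The think-first, generate-second split can be pictured as two functions with a structured plan passed between them. What follows is a minimal sketch only, not GLM-Image's actual code: `LayoutPlan`, `plan_layout`, and `render` are hypothetical names, the prompt format is invented, and the "renderer" just emits text rows where a real system would produce pixels.

```python
from dataclasses import dataclass, field


@dataclass
class LayoutPlan:
    """Hypothetical structured plan handed from the reasoning stage to the renderer."""
    title: str
    labels: list[str] = field(default_factory=list)


def plan_layout(prompt: str) -> LayoutPlan:
    """Stage 1 (stand-in for reasoning): split a prompt into a title and labels.

    Assumed toy prompt format: "Title: label one; label two; ...".
    """
    title, _, rest = prompt.partition(":")
    labels = [part.strip() for part in rest.split(";") if part.strip()]
    return LayoutPlan(title=title.strip(), labels=labels)


def render(plan: LayoutPlan) -> str:
    """Stage 2 (stand-in for rendering): consumes only the plan, never the raw prompt."""
    rows = [plan.title.upper()]
    rows += [f"  [{i}] {label}" for i, label in enumerate(plan.labels, start=1)]
    return "\n".join(rows)


plan = plan_layout("Plant cell: nucleus; chloroplast; cell wall")
print(render(plan))
```

Because `render` sees only the `LayoutPlan`, any change to how prompts are interpreted stays inside `plan_layout`, which is the point of keeping the two stages separate.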

How Does GLM-Image Understand Complex Instructions and Deep Knowledge?

GLM-Image relies on a 9B autoregressive reasoning model that interprets dense prompts, breaks down relationships, and extracts semantic structure before rendering begins.

This is the cognitive engine behind the system. It gives GLM-Image the ability to:

understand multi-step instructions

follow structural constraints

parse scientific or educational text

interpret text-heavy prompts

maintain factual and logical relationships

differentiate between visual elements and textual content

Autoregressive Reasoning as the First Step

The model processes input token by token, building an internal representation that includes:

conceptual hierarchy

spatial and structural cues

labels, annotations, and text blocks

relationships between components

This gives GLM-Image a level of precision and instruction-following rarely seen in image generation.
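Token-by-token processing can be illustrated with a toy greedy decoder. This is a sketch under loud assumptions: the vocabulary and scores in `NEXT_TOKEN_SCORES` are invented, and the toy looks only at the previous token, whereas a real autoregressive model conditions on the entire sequence so far.

```python
# Toy next-token scores: for each previous token, a score per candidate next token.
# The vocabulary and numbers are invented for illustration only.
NEXT_TOKEN_SCORES = {
    "<bos>": {"title": 0.9, "label": 0.1},
    "title": {"diagram": 0.7, "label": 0.3},
    "diagram": {"label": 0.8, "<eos>": 0.2},
    "label": {"<eos>": 0.6, "label": 0.4},
}


def greedy_decode(max_steps: int = 10) -> list[str]:
    """Generate one token at a time, always picking the highest-scoring next token."""
    sequence = ["<bos>"]
    for _ in range(max_steps):
        scores = NEXT_TOKEN_SCORES[sequence[-1]]  # toy model: last token only
        next_token = max(scores, key=scores.get)
        if next_token == "<eos>":
            break  # the model signalled that the sequence is complete
        sequence.append(next_token)
    return sequence[1:]  # drop the start marker


print(greedy_decode())  # ['title', 'diagram', 'label']
```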

Semantic VQ Tokenization

Another key component is semantic tokenization, which helps the model retain meaning when converting text and conceptual descriptions into structured internal representations.

This combination is what allows GLM-Image to operate more like a scientific illustrator than a pure diffusion model.
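In general, vector quantization (VQ) maps continuous feature vectors to discrete token ids by nearest-neighbour lookup in a codebook. The sketch below shows only that generic mechanism; the 2-D `CODEBOOK` and the `quantize` helper are hypothetical, and how GLM-Image's semantic variant is built or trained is not something this example claims to reproduce.

```python
import math

# Hypothetical 2-D codebook; real codebooks are learned and far larger.
CODEBOOK = [
    (0.0, 0.0),
    (1.0, 0.0),
    (0.0, 1.0),
    (1.0, 1.0),
]


def quantize(vector: tuple[float, float]) -> int:
    """Return the index of the codebook entry closest to `vector` (Euclidean distance)."""
    return min(range(len(CODEBOOK)), key=lambda i: math.dist(vector, CODEBOOK[i]))


features = [(0.1, 0.2), (0.9, 0.1), (0.8, 0.95)]
tokens = [quantize(v) for v in features]
print(tokens)  # [0, 1, 3]
```

Each continuous vector is replaced by a single integer, which is what lets a token-based model read and write such representations as a sequence.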

How Does GLM-Image Generate High-Fidelity, Text-Dense Images?

Once reasoning is complete, GLM-Image uses a 7B DiT diffusion decoder to render crisp images with clean text, accurate structure, and stable visual layouts.

7B DiT Diffusion Decoder for Visual Precision

This component focuses on:

high-fidelity details

smooth gradients and textures

realistic lighting

consistent geometry

typography alignment

multi-panel uniformity

overall visual coherence
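The shape of a diffusion sampling loop (start from noise, repeatedly predict and remove noise) can be sketched in a few lines. This is a toy under stated assumptions, not the GLM-Image decoder: `ddim_sample` is a hypothetical name, the noise schedule is invented, and the trained denoising network is replaced by an oracle that already knows the target, so the loop converges to the target by construction.

```python
import math
import random


def ddim_sample(target: list[float], steps: int = 10, seed: int = 0) -> list[float]:
    """Deterministic DDIM-style sampling with an oracle noise predictor.

    `target` plays the role of the conditioning signal; a real decoder would use a
    trained network conditioned on upstream tokens instead of the oracle below.
    """
    rng = random.Random(seed)
    # alpha_bar[t]: share of signal left at step t (1.0 = clean, 0.01 = mostly noise).
    alpha_bar = [1.0 - 0.99 * t / steps for t in range(steps + 1)]
    x = [rng.gauss(0.0, 1.0) for _ in target]  # start from pure noise

    for t in range(steps, 0, -1):
        a_t, a_prev = alpha_bar[t], alpha_bar[t - 1]
        # Oracle stand-in for the denoiser: the noise that would explain x given target.
        eps = [(xi - math.sqrt(a_t) * x0) / math.sqrt(1.0 - a_t)
               for xi, x0 in zip(x, target)]
        # Estimate the clean sample from the predicted noise.
        x0_hat = [(xi - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t)
                  for xi, e in zip(x, eps)]
        # Step to the previous, less noisy level.
        x = [math.sqrt(a_prev) * x0 + math.sqrt(1.0 - a_prev) * e
             for x0, e in zip(x0_hat, eps)]
    return x


sample = ddim_sample([0.2, -0.5, 1.0])
print([round(v, 6) for v in sample])
```

In a real decoder the quality of the result depends entirely on how well the learned network predicts the noise; the oracle here only makes the control flow visible.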

Built-In Text Layout Rendering

One of GLM-Image’s most distinctive strengths is its ability to maintain:

aligned labels

well-placed annotations

coherent paragraph blocks

diagram-friendly layouts

typographic consistency

This makes GLM-Image uniquely capable of producing text-dense, knowledge-rich images that remain clean and legible.

Unlike most diffusion models, GLM-Image doesn’t treat text as noise or texture; it treats it as information that must remain readable.

What Are the Best Use Cases for GLM-Image?

GLM-Image shines in use cases where Meaning + Clarity + Aesthetics must coexist.

1. Scientific & Educational Illustrations

GLM-Image interprets scientific concepts and renders them with accurate structure and readable labels.

biology diagrams

physics concepts

step-by-step process visuals

labeled charts

data-rich explanatory graphics

Advantage: reasoning ensures scientific relationships stay correct, while rendering keeps text clear and diagrams consistent.

2. Commercial Posters & Marketing Materials

Professional layouts require balanced typography and precise visual hierarchy, both areas where GLM-Image performs well.

text-heavy poster designs

structured layout compositions

branded graphics

academic conference posters

Advantage: the model preserves structured layouts and delivers high-quality graphics that remain informational, not chaotic.

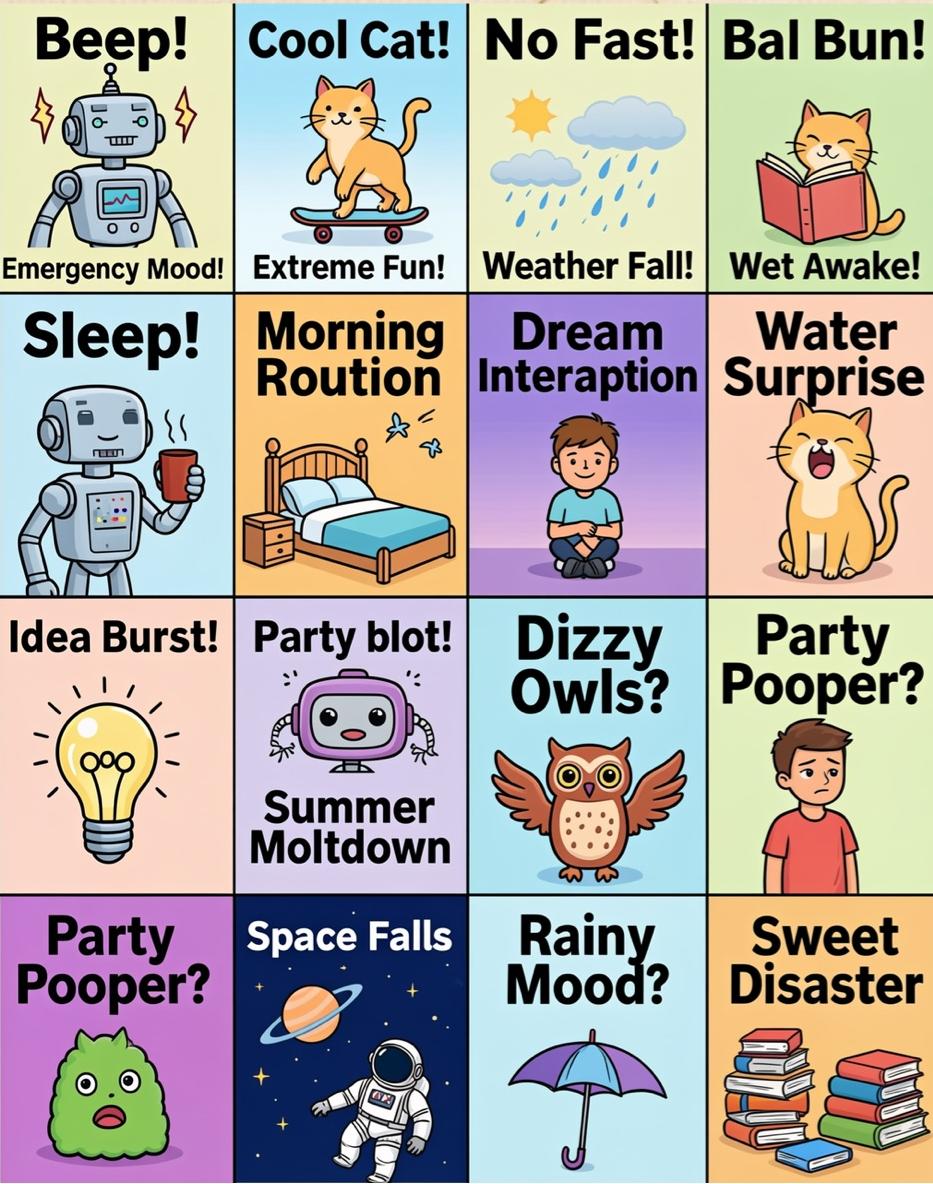

3. Social Media Content

GLM-Image transforms complex ideas into clean, shareable layouts optimized for visual platforms.

infographic-style posts

text-image social graphics

educational summaries

visually organized knowledge snippets

Advantage: cognitive reasoning enables clear messaging, reducing clutter and improving readability for fast-scrolling audiences.

4. E-commerce Displays

E-commerce visuals depend on consistency, clean layouts, and structured comparisons, all of which suit GLM-Image.

multi-panel product layouts

consistent product showcases

structured comparison images

Advantage: the model maintains uniform style and clean typographic placement across multiple images.

5. Realistic & Artistic Creation

GLM-Image supports varied styles without losing fidelity.

style-specific visuals

artistic explorations

concept art

highly controlled compositions

Advantage: GLM-Image ensures rich textures and stable compositions across creative outputs.

GLM-Image and the Future of Cognitive Generative AI

GLM-Image represents an early step toward broader cognitive generative systems: AI models that reason, interpret, and generate in a unified manner.

As research advances, future improvements may include:

richer cross-modal reasoning (text + image + structure)

enhanced long-text processing

more accurate diagram synthesis

higher-resolution rendering

stronger multimodal consistency

AI models will move beyond “drawing from prompts” toward representing human knowledge visually.

GLM-Image shows how separating semantic understanding from visual rendering can unlock new possibilities in AI image generation. Its cognitive generative design, reasoning first and rendering second, allows it to handle text-dense, knowledge-rich, and instruction-heavy tasks with unusual clarity and high fidelity.

If cognitive generative AI interests you, or if you’re curious how complex knowledge becomes clear imagery, explore "What is GLM-Image" and try the GLM-Image generator to experience it for yourself.

Reference: GLM-Image: Auto-regressive for Dense-knowledge and High-fidelity Image Generation